To use the static version of the application hosted on Heroku, click here. (Might take some time to load)

For the GitHub repository of this project, click here.

Summary

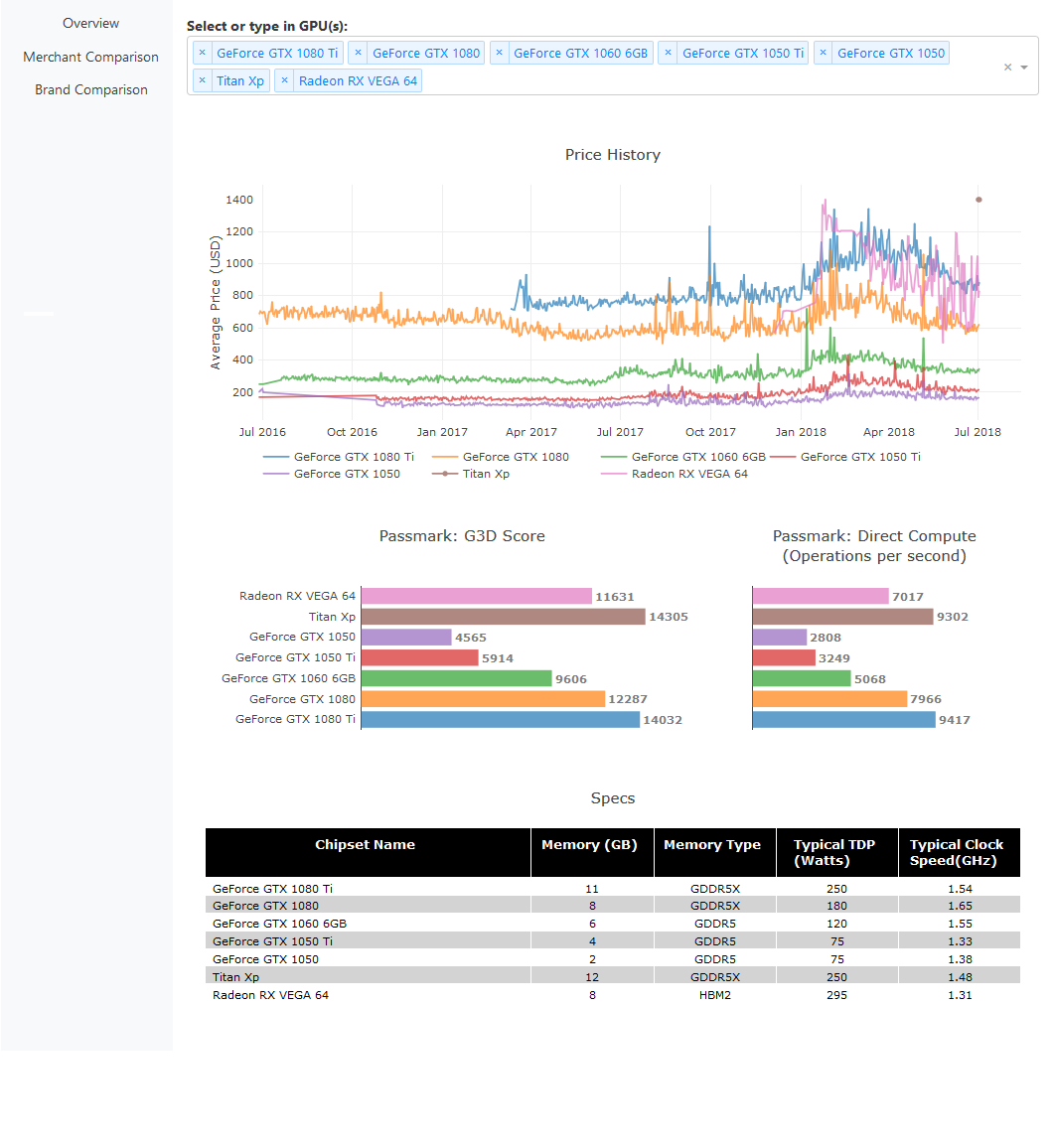

The purpose of this project is to create a web application that allows users to easily compare prices and specifications of popular GPUs. There are many websites that try to accomplish this task. However, the majority of them are overloaded with ads and links to their affiliates, or they overwhelm you with information you don't really care about. I wanted to create something that condenses the data down into a simple interface. I also wanted to provide something that most websites lack: the price history.

Motivation

As a hardcore gamer/PC builder myself, I want to answer the following questions:

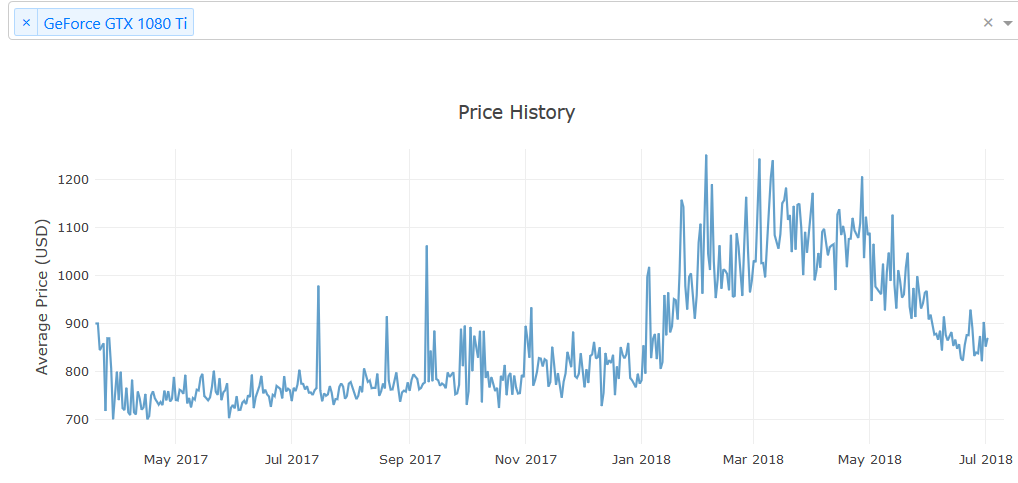

This question is harder to answer because no one really knows when the next series of GPUs is coming out. However, we can look at the price-performance history and try to find trends there. For example, a GTX 1080 Ti is actually more expensive today (7/1/2018) than it was when it first came out (3/11/2017).

Web scraping and the Back-End

The back-end for this project is a SQLite database currently hosted on DigitalOcean, a cloud VPS provider. It is served using Apache and mod_wsgi through Cloudflare. I wrote a Python script that scrapes data from PCPartPicker and PassMark using BeautifulSoup. The script runs daily at 00:10 Pacific time. The database was designed to be as small as possible (~5 MB), so it is lightweight and even fits into a GitHub repo.
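To give a rough idea of the storage side of the daily scrape, here is a minimal sketch using only Python's built-in `sqlite3` module. The table names, column names, and helper functions (`open_db`, `record_prices`, `price_history`) are hypothetical and are not the project's actual schema or code; the scraping step itself is left out, and the prices in the usage note are made-up placeholders.

```python
import sqlite3
from datetime import date

# Hypothetical schema: table and column names are illustrative only.
SCHEMA = """
CREATE TABLE IF NOT EXISTS gpu (
    id   INTEGER PRIMARY KEY,
    name TEXT NOT NULL UNIQUE
);
CREATE TABLE IF NOT EXISTS price (
    gpu_id INTEGER NOT NULL REFERENCES gpu(id),
    day    TEXT    NOT NULL,   -- ISO date string, e.g. 2018-07-01
    cents  INTEGER NOT NULL,   -- integer cents keep rows small and exact
    PRIMARY KEY (gpu_id, day)  -- at most one price per GPU per day
);
"""

def open_db(path=":memory:"):
    """Open (or create) the database and make sure the tables exist."""
    conn = sqlite3.connect(path)
    conn.executescript(SCHEMA)
    return conn

def record_prices(conn, scraped, day):
    """Store one day's scraped (name, price_in_dollars) pairs.

    Re-running the scraper on the same day overwrites that day's row
    instead of adding a duplicate.
    """
    with conn:  # commits on success, rolls back on error
        for name, dollars in scraped:
            conn.execute("INSERT OR IGNORE INTO gpu (name) VALUES (?)", (name,))
            (gpu_id,) = conn.execute(
                "SELECT id FROM gpu WHERE name = ?", (name,)
            ).fetchone()
            conn.execute(
                "INSERT OR REPLACE INTO price (gpu_id, day, cents) VALUES (?, ?, ?)",
                (gpu_id, day.isoformat(), round(dollars * 100)),
            )

def price_history(conn, name):
    """Return [(day, dollars), ...] for one GPU, oldest first."""
    rows = conn.execute(
        "SELECT p.day, p.cents FROM price p JOIN gpu g ON g.id = p.gpu_id "
        "WHERE g.name = ? ORDER BY p.day",
        (name,),
    )
    return [(day, cents / 100) for day, cents in rows]
```

A daily job could call `record_prices(conn, rows, date.today())` with whatever the scraper returned, for example `[("Example GPU", 700.0)]`, and the front-end could then read `price_history(conn, "Example GPU")` to draw the price chart. Keeping GPU names in their own table and storing one integer per GPU per day is one way to keep a database like this small.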

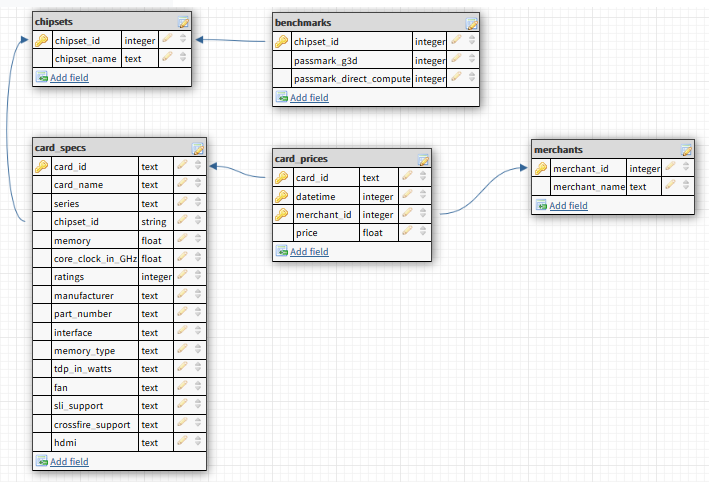

The following is the database schema: